Marc Loftus: Hi, I'm Marc Loftus, editor of Post, and I'd like to welcome you to the third in a series of three podcasts sponsored by Dell Technologies. Once again, I'm joined by Alex Timbs and Jason Lohrey, both of whom have considerable expertise in the space. Alex Timbs heads up business development and alliances for media and entertainment at Dell Technologies. Welcome back, Alex.

Alex Timbs: Marc, thanks so much for having me back again.

ML: Great to have you. Jason Lohrey is the CTO of Arcitecta, which has created its own comprehensive data management platform called Mediaflux. Welcome, Jason, as well.

Jason Lohrey: Thank you, Marc. It's great to be back.

ML: Good to have you guys again. I am looking forward to the conversation. I just want to recap for our audience some of the topics that we've talked about over the series. This is our third episode in this podcast series. In the first episode, titled 'The Era of Data Orchestration', we looked at some of the problems facing content creators and the media and entertainment space. In our second episode, we took a look at how automation can help streamline media and entertainment workflows. And today, in episode three, which we're titling 'The Rise of Cyber and Ransomware', we're going to look at some of the risks facing media and entertainment pipelines and how data can be better secured. Jason, you've authored another article for Post and I'm going to include the link to it in the description for this podcast so that our audience can check it out. It addresses a lot of these concerns and a couple of interesting points about who's responsible for the security, where it starts, and one interesting idea. That is, unless you're keeping your content in a locked box somewhere, everybody has to worry about this. We don't have that ideal situation where it is locked up and it's secure and nobody has access to it. With these collaborative workflows, there's so much more risk these days. So where do we want to get started?

JL: I wouldn't mind answering that point. I should dovetail back in with that point about the locked box.

ML: Yeah, go ahead. Let's talk about that because in the past, you know, there was a lot more control as to who has access to it. Maybe it was only one facility that was working on it and drives were being sneaker netted around a facility where they're actually carrying pieces of media. But that's not the case anymore with these networked workflows. So what are your thoughts?

JL: Well, clearly, the safest way to keep something is in a locked box and make sure that it's guarded, and there are no electronic connections to those. But we don't live in that world anymore. There are many advantages in a connected world, one where we use the Internet to transmit data around the globe at speed, that connects people and systems together. We've almost got real time collaboration. We can't go back to those days without this interconnectedness. We've got locked boxes, but we need to really improve the systems that we've gotten to protect our data.

ML: One of the points that's brought up in the article, again, Jason, is that this isn't an I.T. issue. I think a lot of people have the perception that, “I'm a content creator, there's somebody behind the scenes that's managing and securing all this”. But that's not the case. It's not the I.T. Department's sole responsibility. Everybody really has some kind of role. And you have to identify what and where some of these risks are.

JL: Well, I.T. does have a position to play because they look after the systems and look after those things that sit below the waterline, making sure those are functioning correctly. But all of us have a role to play. Humans are fallible. Email, scamming, phishing and so on are very common vectors for penetrations into systems, and those often go through the weakest link, humans. We need systems to be keeping an eye on those as much as possible to help back the people up. But we also need to train our people to make sure they are continuously aware of what's going on. This is a game that is going to run indefinitely. If you have something somebody else wants, they're always looking to gain a point of leverage and exploit it to their advantage. They're not going to give up. We're going to be doing this and reacting to the changes in the environment and the way in which people find exploitations for decades, if not centuries, to come.

AT: Yeah. Look, it's definitely not going away. I think, Marc, you touched on something that rings very true to me based on my experience in the industry, that it's not an I.T. problem. As Jason said, I.T. plays a huge role in mitigating risk within the business, both physical and digital. However, cyber really needs to be a whole-of-business problem, and it's too often characterised as a bottom-up, technology-driven initiative where production is prioritised over all other business activities. The consequences of that approach ultimately lead to poorer business outcomes, which is really embodied in the lack of business strategy and awareness, and some of the superficial approaches to risk management. So, it really needs to come top down and be looked at as a cultural shift, a fundamental cultural requirement.

I might just jump back to some of the drivers as to why that's so important now. Before the pandemic, and now as a continuing trend, we had a significant shift to support remote workflows, to give access to resources, both human and infrastructure, where it made sense, such as for tax incentive reasons. So that was already in motion, but it really didn't have a lot of gravity. COVID-19 obviously forced that. Facilities had to embrace these new paradigms, including supporting everyone working from home and adopting new workflows, which included SaaS (Software as a Service) and PaaS (Platform as a Service) offerings. So there was this really seismic shift to dynamic workflows that was accelerated by COVID and lockdowns. That meant that the time to market, or time to get a solution, was really short for most businesses, particularly the strategic decision making about how to get people working from home. So there was this avalanche of projects to pull everything apart and try to support it anywhere. These rapid changes, without the time to plan and test properly, have created a lot of additional attack surfaces which could potentially facilitate the loss of content, the intentional destruction of content, or the misappropriation or misuse of content. All of that is combined with the massive amount of demand for content, shortened delivery times and fairly tight margins. So there are a lot of factors in play to create this perfect storm where companies have increased risk. And I haven't seen, personally, the same level of response to that increased risk, i.e., I haven't seen companies investing enough in cultural changes within their business to mitigate that risk.

ML: One of the points of risk that was brought up, Jason, and I think your article touches on it, is that risk isn't something that you look at once and say, we've got this figured out. It's something that needs to be continually observed to see where these risks are, and what may have been a risk, you know, at one point has become more of a risk, or something new has popped up. It's not something that is just a steady threat. It comes from many different areas.

JL: That's right, Marc. We're not going to have a threat today or tomorrow, and that's the end of it. We're going to have a threat every week, every day, every hour and possibly every second at some time. We've got to be on our game here and looking at the systems as a whole. That is both people and technology. We need vendors and industry to work together to make sure that they can go where they need to. They might go back to a first principles approach to change the playing field, to thwart efforts by others to steal content and to hold it hostage. I think in a lot of ways we're often adding Band-Aids to existing systems and approaches to plug holes. I don't think that's right for the long term; we've got to be playing two strategies. We've got to plug those holes, but we also need to be taking a long-term view and seeing if we can fundamentally shift the paradigms to make it harder for others to exploit what we're doing. There's an interesting analogy in the banking sector. We all know about credit card fraud. There's a very simple remedy to a lot of credit card fraud, apart from the online fraud: if you put a photo on a credit card, that will obviate a lot of fraud. But people don't want to do it because of the cost of someone going to get their photo on a card. So the banks absorb the cost and have systems that sit on the side to look at what's going on and stop this in the middle while it's happening or after the fact. So we have to evaluate what we're prepared to do to stop the cyber criminals from gaining advantage. And sometimes we may not want to do things that we could do that would stop them in their tracks because it's too costly, and we might accept certain levels of risk. And so it's completely a risk evaluation problem.

ML: Jason, can you explain how Arcitecta fits into the workflow? For example, “I'm a facility that has my own storage and I'm working with other facilities that have their own storage”. Maybe it is Dell, maybe it's other manufacturers' solutions. Where does Arcitecta come in from an installation or a licencing standpoint? What is the model there? How do you actually take advantage of it if you're a facility that doesn't already have it?

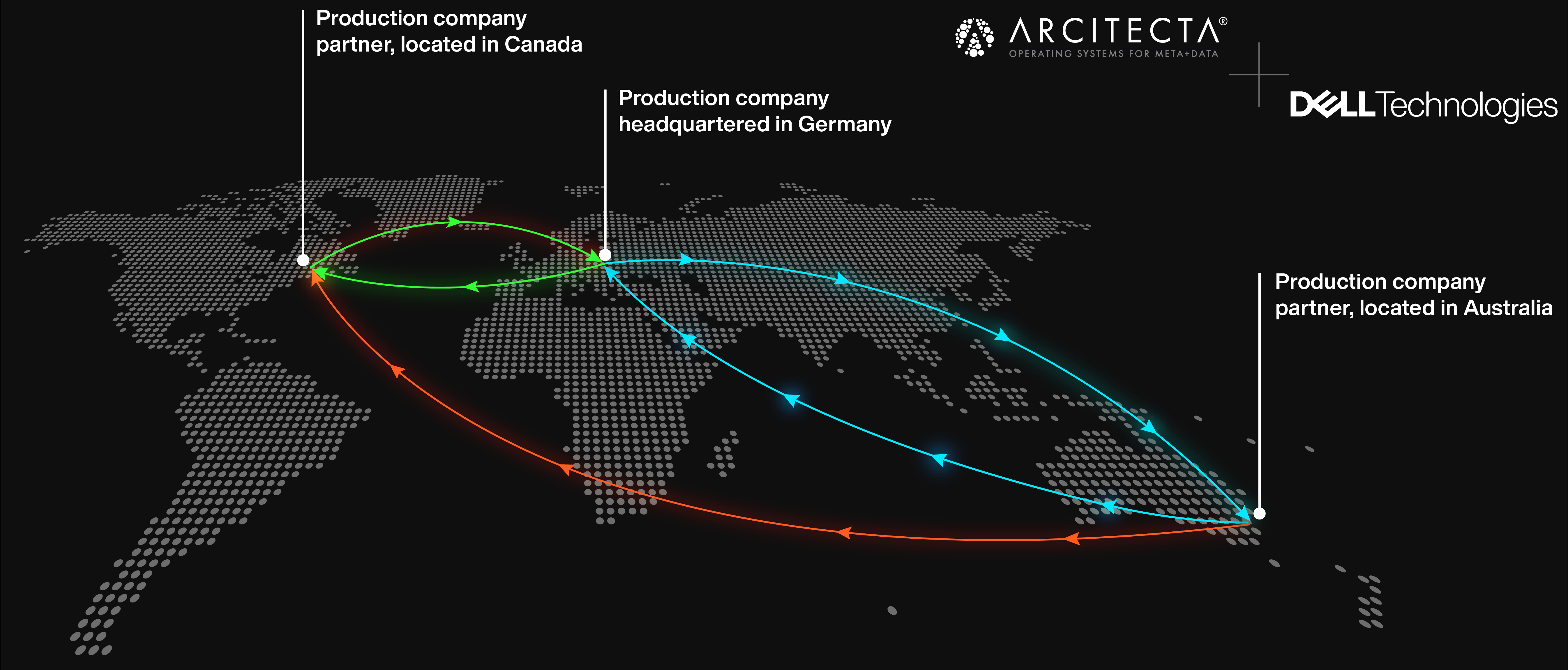

JL: So there are two ways to do this. We can sit off to the side, that is out of band, and we can scan any kind of storage that presents as file, object, tape, etc. But the best place for us to be positioned is in band where we are the file system, where Mediaflux is in the data path, because we can see every change the millisecond that it happens. So when someone saves a file and changes the state of it, we can be transmitting it from one place to another. In other words, it gives us an opportunity to parallelise the data pipeline. A really good example of that is where you've got two sites, a geo-distributed workflow. Someone changes the state at a certain point in their process at site A. The moment we see that, each change could be transmitted to site B. Whereas traditionally you would wait until the entire process is completed at site A and then transmit everything you need. That's a sequential process, whereas when we're in the pipeline, in the data path, we can orchestrate these workflows the moment a trigger occurs. So really, Marc, optimally, we are in the data path, but people often start out by putting us to the side, and we can scan and start managing data so we can check in and check out. Once we've got some visibility of the data, we can then put these processes on top of it, and we can reproject that data out through the front door. So even though we've scanned an external file system or some sort of storage device, we can reproject that through another protocol elsewhere.

ML: Alex, you had a couple of ideas about how this can be combated. One of those was with chain of custody. Is that something you would want to touch on?

AT: Absolutely. In this industry, the most valuable thing from a business perspective, apart from your trusted employees, is the data itself. That is because it's the data that represents that creative endeavour, the investment or value, and that's generally what is most sought by people that have some malintent towards you. So really, it's about controlling and managing the risks associated with that data. Businesses really need to look at how they're managing their data, which includes asset management or, for a better description, metadata management. Metadata can answer questions such as: What is it? How is it classified? How valuable is it? Where is it currently? Where has it been? Who should have access to it? And so on. Importantly, in this geo-distributed world that we find ourselves in now, we should be thinking about only moving the data to the process or the individual as it's needed, and then potentially getting rid of that data, or deprecating it, or having a time to live on that data, once that process is complete. And that can be done really well with an asset management/metadata management approach. There are a whole lot of technologies that can deliver that data rapidly to where it needs to be. But, as we've covered in previous podcasts, you can only do that through automation because the size of the data is humanly impenetrable now. Animated films, or VFX for that matter, are now multi-petabyte scale with hundreds of millions, probably soon to be billions, of files. It's impossible for a human to manage that amount of information, to know where that should be, who should have it, who's actually accessed it, and so on. So you need to have these automated systems in place to do that for you. Obviously, you need to have an idea about what those connections are and who should have it and who's who, because the system is not going to do that for you.

If you're not already heading down that path, you need to start having a conversation at that level about metadata and asset management.
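
As an illustration of the "time to live" idea Alex describes, here is a minimal Python sketch of how metadata about delivered copies could drive an automated clean-up. It is not taken from Mediaflux or any Dell product; the `AssetCopy` record, its fields, the `sweep` function and the asset names are all hypothetical, and a real system would also have to perform and audit the actual deletion.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class AssetCopy:
    """One delivered copy of an asset (all fields are hypothetical)."""
    asset_id: str
    location: str            # where the copy was sent, e.g. a remote site
    delivered_at: datetime   # when the copy arrived there
    ttl: timedelta           # how long the copy is allowed to live

    def expired(self, now: datetime) -> bool:
        # A copy is expired once its time to live has fully elapsed.
        return now >= self.delivered_at + self.ttl


def sweep(copies, now):
    """Split copies into (keep, purge) according to their time to live."""
    keep = [c for c in copies if not c.expired(now)]
    purge = [c for c in copies if c.expired(now)]
    return keep, purge


delivered = datetime(2022, 3, 1, 9, 0, tzinfo=timezone.utc)
copies = [
    AssetCopy("shot_010_v003", "site-b", delivered, timedelta(days=7)),
    AssetCopy("shot_010_v002", "artist-home", delivered, timedelta(days=1)),
]

# Two days after delivery, only the one-day copy is due for removal.
keep, purge = sweep(copies, now=delivered + timedelta(days=2))
print([c.asset_id for c in purge])
```

The point of the sketch is that the decision is made from metadata alone: nobody has to remember which of millions of files were sent where.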

ML: Interesting point you brought up there. I think it was about the lifecycle, or time to live, where some assets are only available for a certain amount of time, and after that, you're kind of closing the door on them so that they're not just hanging out somewhere they shouldn't be.

AT: This industry is built on iterations, right. It's about creative distillation. The more iterations you can do of a piece of creative work, the more likely you are to distil a better output. And certainly for directors and art directors, their job is really to continue improving and refining things. So they need to see lots of different versions of it, tens, hundreds, sometimes thousands of different versions of a particular asset, and you do that through automation. This generates a lot of different versions and it rapidly deprecates data. So what you don't want to do is have that deprecated data not managed. You don't want to have it lying around on different systems or in people's homes just because it wasn't used. So I just wanted to add that this industry in particular generates a lot of material that ends up on the cutting room floor, the stuff that didn't make it into the film. There's a lot of that digital debris that is left around, and that needs to be managed just as much as the current valuable assets.

ML: Good point. When you talk about iterations, how something evolves over time, you wonder about those prior iterations that have been saved somewhere. What happens with them? Obviously, from the production standpoint, you may be thinking, hey, we're done with that, that's something old. It's not something that I need right now, but it's still an asset that needs to be tracked and secured.

AT: Just because an asset's been deprecated at that point in time, it doesn't mean that there won't be a creative pivot later on in the film to reuse an asset that was done 12 months ago. So it may be deprecated, it may not be valuable at the time, but you need to have the ability to bring that asset back, at short notice, if needed.

JL: The shorter the time these things live, the smaller the attack surface is. So, it's harder for someone to get hold of it. If things are left lying around, there's a greater chance that people will find those or penetrate the systems that hold those.

The other benefit of metadata is that it actually gives you a sense of what is normal. Things are flowing, and if you know what normal is, you can detect abnormal. It's really important to be able to track the complete provenance and auditing, and know what should be going where and when. When it gets outside of those swim lanes, if you like, you know that's abnormal and there's something wrong going on. When you've got so much data, and so many systems and processes that it is very hard for humans to keep track of, it's not within their ability to see when small things are going wrong. We need the systems to help us identify cracks, and when people are gaining entry.

ML: That might bring us right to Arcitecta and some of your solutions there, Jason. Do you have, within your technology, alerts that would point out something that is unusual in, like you said, staying within your lane when it comes to creating content? Is there something where you would say, hey, this is unusual, these files should not be available here or whatever, that you would alert somebody within the production to an anomaly?

JL: When you're in the data path, there's an incredible amount of metadata that's available. Where things are coming from, who's doing what, when they do it, the cadence between them doing things. The automatic extraction of metadata provides information for forensic analysis and for patterns of life and prediction of behaviour. So the first job that you need to do is to capture as much contextual information as possible. If you just had a file and you didn't know who produced it, or where it came from, it's not as valuable as knowing where it has come from, and what its purpose is, and where it should be going to. So if it should be going from A to B, and then all of a sudden you see it going to C, that's not normal. You should do something about it. So, yes, we can. Once we've got the metadata in the system, we can put surveillance on that to monitor for abnormal behaviour. There are also other things that you can do as well. We should have systems that prevent people from deleting things that they're not entitled to delete. We need to incorporate the use of multifactor into our systems. We need to look at whether we use things like WORM, write once, read many. And when we do that, we've got to make sure that we don't lose the things that are costly to acquire. But also in this industry, everything we produce is intellectual property and that, in its own right, is exploitable. So we've got to make sure that people are not getting access to that in the first place.
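
Jason's "A to B, then suddenly C" example can be sketched in a few lines of Python. This is only an illustration of the general idea of learning what is normal from transfer metadata and flagging departures from it; it is not how Mediaflux implements surveillance. The event fields (`asset_class`, `src`, `dst`), the function names and the `min_count` threshold are all assumptions made for the example.

```python
from collections import Counter


def route(event):
    """Reduce a transfer event to the route it represents."""
    return (event["asset_class"], event["src"], event["dst"])


def learn_routes(history, min_count=2):
    """Treat any route seen at least min_count times as normal."""
    counts = Counter(route(e) for e in history)
    return {r for r, n in counts.items() if n >= min_count}


def flag_abnormal(events, known_routes):
    """Return the events whose route has not been established as normal."""
    return [e for e in events if route(e) not in known_routes]


# Historical metadata: plates have repeatedly moved from site A to site B.
history = [{"asset_class": "plates", "src": "A", "dst": "B"}] * 3
known = learn_routes(history)

today = [
    {"asset_class": "plates", "src": "A", "dst": "B"},  # expected
    {"asset_class": "plates", "src": "A", "dst": "C"},  # never seen before
]
alerts = flag_abnormal(today, known)
print(alerts)
```

A production system would look at far more context (who, when, cadence, volume), but the structure is the same: capture the metadata first, then monitor against it.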

ML: Would that fall under the category of analytics? That all this metadata is being analysed and therefore it would point out some discrepancies in what a predictable workflow might be?

JL: That's right. There is no single solution that protects the entire space. We need multiple lines of defence. We need the last line of defence that we can rely on, just in case everything else falls over. But we've got to be going up the stack so we leverage as many first lines of defence as we can. The analytics are not the first line of defence. They're a second and third line of defence because something is already happening, and we're analysing what's happening, but we're trying to stop that before it wreaks too much havoc and we can follow up. So we absolutely need those, but we also need to look at systems that disable the attack in the first place. For example, if someone's attempted to crypto lock all of your data, how can we stop them from doing that in the first place? Well, multifactor is a very interesting way to stop that. Or making sure that if that does happen, that we've got ways of reverting what's happened as easily and as quickly as possible.
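
The two ideas Jason mentions here, a multifactor check before a destructive operation and the ability to revert it, can be shown together in a small Python sketch. It is a toy in-memory model, not a description of any real file system or of Mediaflux: `ProtectedStore`, its methods, and the `demo_verifier` stand-in for a real second factor (such as a one-time code service) are all hypothetical. The sketch gates deletes only; the same kind of check could in principle be applied to overwrites.

```python
class ProtectedStore:
    """Toy store where deletes need a second factor and can be reverted."""

    def __init__(self, verify_second_factor):
        self._verify = verify_second_factor  # callable(user, code) -> bool
        self._live = {}   # path -> data currently visible
        self._held = {}   # path -> data removed but still recoverable

    def put(self, path, data):
        self._live[path] = data

    def exists(self, path):
        return path in self._live

    def delete(self, path, user, code):
        # First line of defence: refuse the delete without a valid factor.
        if not self._verify(user, code):
            raise PermissionError(f"second factor rejected for {user}")
        # Last line of defence: hold the data so the delete can be undone.
        self._held[path] = self._live.pop(path)

    def restore(self, path):
        self._live[path] = self._held.pop(path)


def demo_verifier(user, code):
    # Stand-in for a real second-factor service; accepts one fixed code.
    return code == "246810"


store = ProtectedStore(demo_verifier)
path = "/show/shot_010/comp_v003.exr"
store.put(path, b"pixels")

try:
    store.delete(path, "mallory", "000000")  # wrong code
    blocked = False
except PermissionError:
    blocked = True

store.delete(path, "alice", "246810")        # valid code, delete succeeds
deleted = not store.exists(path)
store.restore(path)                          # and it can still be reverted
print(blocked, deleted, store.exists(path))
```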

ML: Alex, when it comes to cyber and ransomware, is there anything Dell is doing specifically to address these with its technology or in parallel with Arcitecta?

AT: Yeah, absolutely. So one of the primary things that we did that's Media and Entertainment (M&E) focussed is we got validated architectures with the Trusted Partner Network (TPN). So we engaged a TPN auditor and we went through everything from our physical security within Dell itself, just to test out and apply the same brush to ourselves as what our customers would have to face. We also had the auditor have a deep look at OneFS, the operating system for our storage, and audit that from an access control perspective and from a hardening perspective. And we also did some architectural designs that would be very common in media and entertainment, such as network isolation or segregation, and input/output (I/O), to test how we get data in and out of the building in a secure manner. We made sure that those designs were aligned with the expectations of the TPN, which is an industry body that just about everyone in M&E would know. We also did some initial governance and policy documents that our customers could use if they were facing an initial audit. This was aimed at trying to reduce the friction associated with, and some of the fear and anxiety around, getting audited and trying to design an environment or address issues within an environment. So a lot of our customers received that very well and were quite excited by that. We continue to be engaged at that level and try to stay abreast of all of the governance and expectations there and some of the things that would occur in an audit, including those associated with hybrid or cloud environments. We obviously have our own significant capabilities within Dell from a consulting perspective. I suppose the third thing I would mention would be partnerships such as that with Arcitecta, and others, which have a range of different solutions that are aimed specifically at mitigating the risk within M&E as it relates to data and content.

ML: Working with those trusted partner networks gives confidence to your customers then, knowing that they've met a certain level of standards and that when they are working on a project where they're collaborating they know that they have been essentially vetted, that there have been precautions put in place through the technology and practices.

AT: Yeah. It also means that we go through enablement internally. For example, it means that our sales teams can use the language that the customer understands and they actually understand some of the challenges that our customers are going through that are industry specific and can help bring them solutions or potential solutions to those problems because of that understanding, or that vocabulary that they have.

JL: I also think people need to revisit the way in which we view this problem. We've had file systems for a long time, and then we got cyber criminals doing things in those file systems. So we added mechanisms to try and stop that. But we didn't change the file system. We just kept the file systems that we had and we've bolted things on. We've taken a first principles look at that and said: “How can we stop things happening at the front door, but still keep a file system if we want to?” That said, there's no hubris here. We're not going to say we can stop everything at the front door. That would be a very dangerous position to be in, because this is a game. Everybody's trying to gain advantage. But we will find that we've got an ability to add tooling, which gives a first line of defence for ransomware. What people don't realise is that the advantage of a faster internet and faster interconnect for them is also an advantage to the cybercriminals, because they can launch attacks faster and from many different vectors. So the speed at which this is happening is increasing for everyone. So we're all going to be on our toes.

ML: Good points. Alex and Jason, I want to thank you once again for participating in this podcast and wrapping it up with some interesting perspectives here. I'm going to share this with our audience. I also encourage them to follow the link provided to Jason's article on this topic, 'The Rise of Cyber and Ransomware'. And gentlemen, thank you again for your participation.

AT: Thanks so much for having us.

JL: Thank you very much, Marc, it's been an absolute pleasure.

To read the accompanying article “The Rise of Metadata, Global Namespaces and Orchestrated Workflows” written by Jason Lohrey, the CTO of Arcitecta, visit this link.