It’s no secret that the sheer volume of data is transforming every industry on the globe. In fact, by one estimate, about 2 megabytes of new information have been created for every human being on the planet since you started reading this sentence.

Geospatial has always been the biggest of big data environments. And if you believe even a fraction of the hype around smart sensors, mobile, and autonomous platforms, it is easy to believe that volumes will soon grow well beyond the exabytes that geospatial companies already collect.

As universities and research cohorts grow into petabyte-scale institutions, looking further afield and transplanting innovative solutions from other industries could be a very valuable strategy.

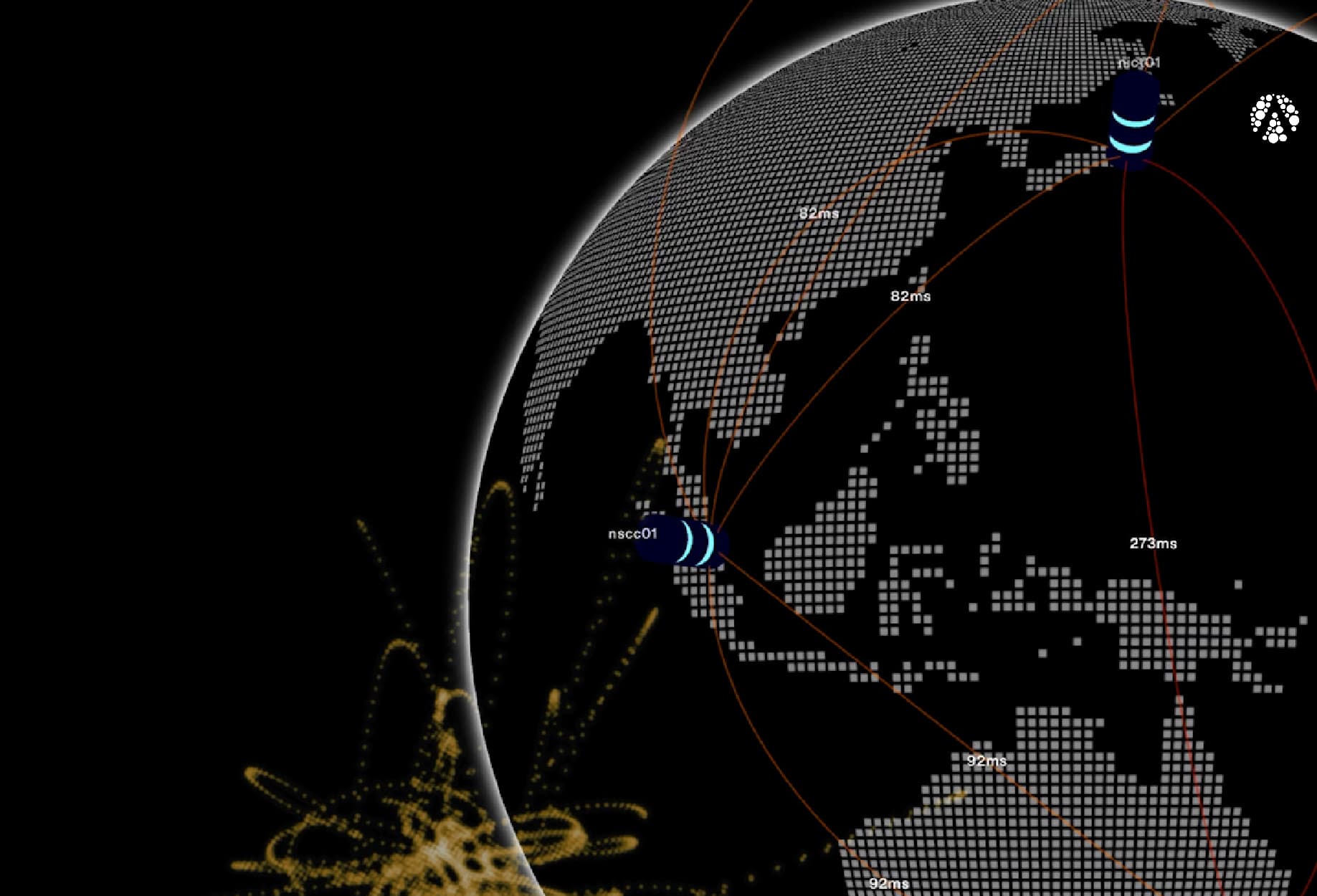

DigitalGlobe is a geospatial imaging company that operates the most agile and sophisticated commercial satellite constellation in orbit, moving and storing huge amounts of geospatial data each month.

DigitalGlobe has a satellite imagery archive of over 40PB, spanning on-premises disk (Isilon, commodity disk), tape (HPE DMF) and cloud (Amazon S3, Amazon Glacier). On a Mediaflux cluster with 13 nodes, we have clocked around 100 million files moving at 65TB an hour.

Mediaflux provides core data management and workflow functions for the entirety of DigitalGlobe's commercial imagery archive. Arcitecta plays a crucial role in the DigitalGlobe data management ecosystem. The Mediaflux cluster manages petabytes of data, ingesting around 70-100TB of new data per day. The DigitalGlobe Mediaflux system currently moves up to 3.5PB (and growing) of data between storage tiers per month.

Turner Brinton, Sr. Manager, Public Relations, DigitalGlobe